Context

Large-scale assessment programs required interactive digital experiences capable of measuring complex skills in authentic contexts. Traditional item formats could not capture multi-step reasoning, tool use, or applied decision-making.

Each task functioned as a self-contained web application that still had to integrate with platform constraints, scoring systems, and telemetry pipelines in a regulated, high-stakes environment.

Role & Scope

As an individual contributor, I designed and delivered 14 full-scale scenario-based assessment tasks, each implemented as an interactive application.

Each task included:

- Storyboard defining the content and user flow through the task

- Custom UI components and multi-state interaction logic

- Embedded tools and task-specific workflows

- Scoring rules and response modeling

- Metadata schemas and API integration

- Telemetry capture for machine scoring and analysis

Approach

I owned the work end-to-end across design, specification, and delivery:

- Collaborated with subject matter experts (SMEs) to translate domain content into interactive, assessable experiences

- Developed and maintained detailed storyboards for each task, guiding design, development, and client review through approval

- Designed user experiences through interaction modeling and interface design

- Translated assessment intent into structured, machine-scorable interactions

- Authored detailed functional specifications defining system states, transitions, navigation, scoring logic, and edge cases

- Served as product owner through vendor development, managing sprint cycles, clarifications, and change control

- Coordinated QA, platform integration, and user acceptance testing to ensure fidelity, usability, and correctness

This work required tightly coupling interaction design, system behavior, and measurement goals so that student actions could be captured as valid, interpretable evidence.

Results

- Delivered 14 production-grade interactive assessment applications

- Enabled measurement of complex, multi-step skills through simulation-based tasks

- Established consistent patterns for integrating interaction design, scoring logic, and telemetry capture

- Reduced ambiguity in implementation through detailed, system-level specifications

Key Takeaway

Interactive assessment design is fundamentally a systems problem: effective solutions require aligning user interaction, technical implementation, and the way evidence of student thinking is captured and scored.

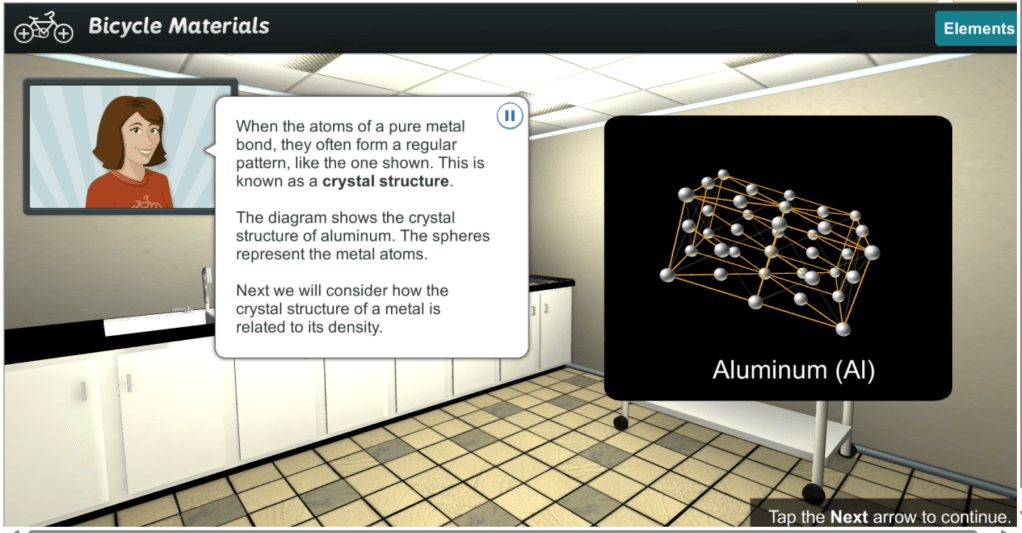

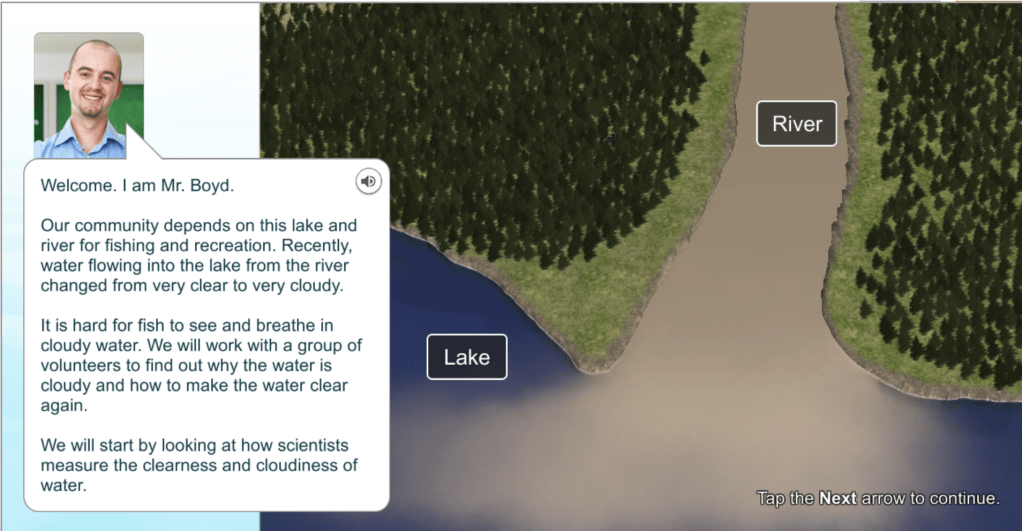

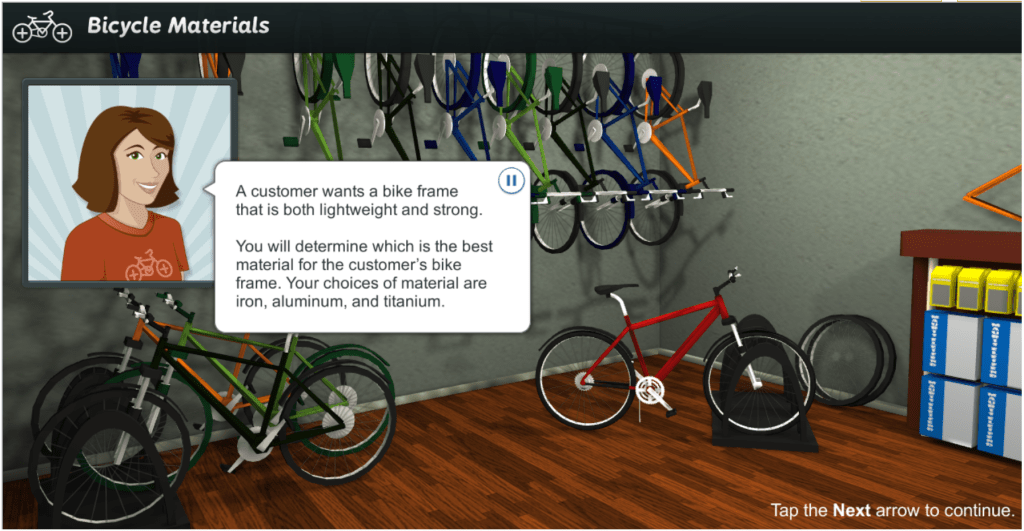

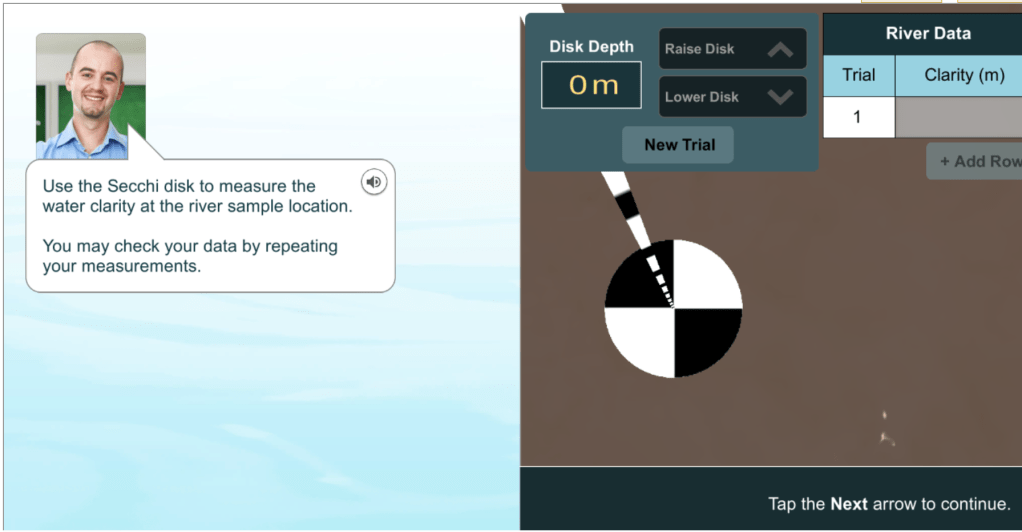

Show and Tell (What We Built)

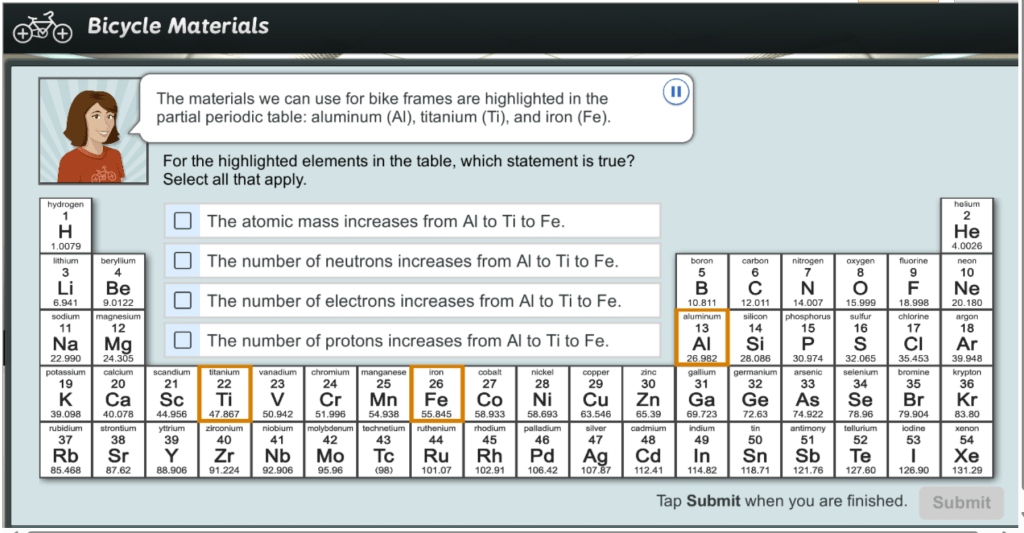

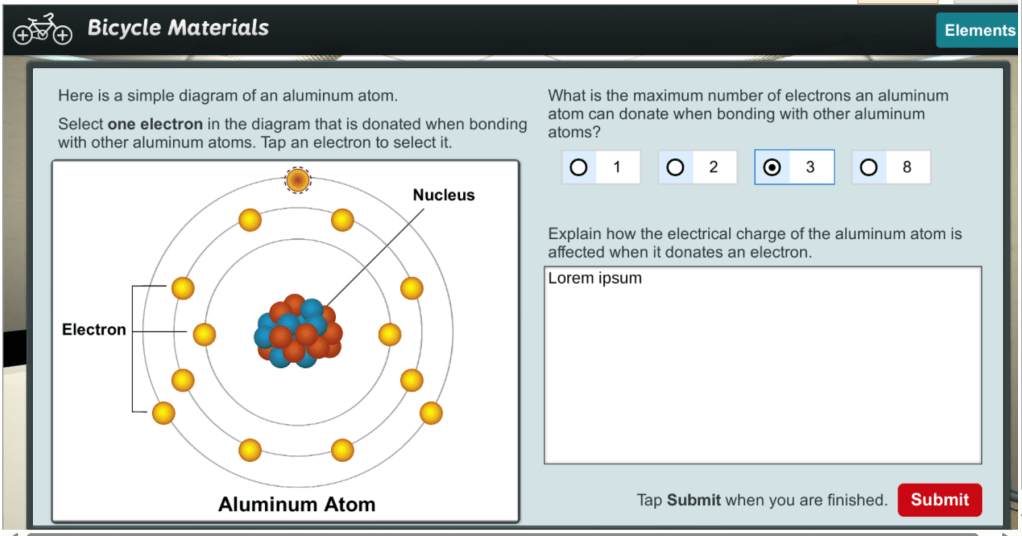

The main type of application I worked on was NAEP Scenario-Based Tasks (SBTs). The examples below are two SBTs that I personally worked on and that were released to the public: Clear Water and Bicycle Materials. I carried most of my SBTs from ideation through production release, but these two had already been designed and piloted with test takers when I took them over. My work on them included revising design and content based on pilot results and modernizing features and functions, such as improving accessibility.

The early days of SBT design were characterized by creative exploration: developing engaging, authentic contexts, designs, and user interface elements that met assessment measurement goals. As a result, these early SBTs varied widely in presentation.

Each SBT begins by establishing an authentic and engaging context for the task.

Test takers were introduced to the context and guided through an activity to solve a problem (engineering) or conduct an investigation (science). This format let test takers apply their knowledge and skills in an authentic context, and it let the SBT developers (myself as designer, along with assessment specialists) write item content that was more closely integrated with the larger task.

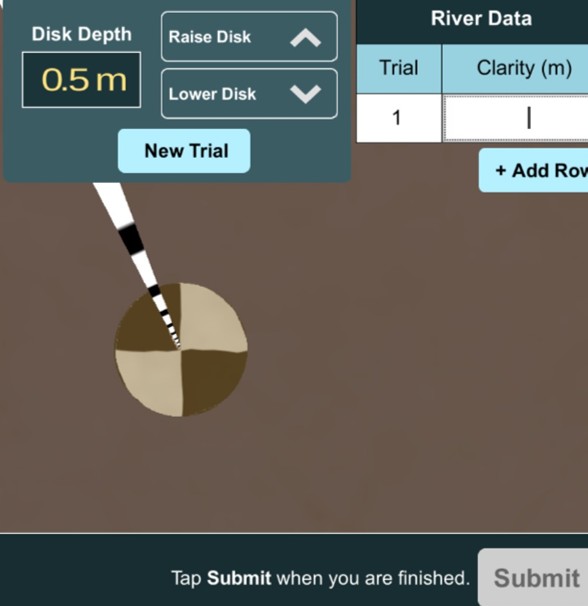

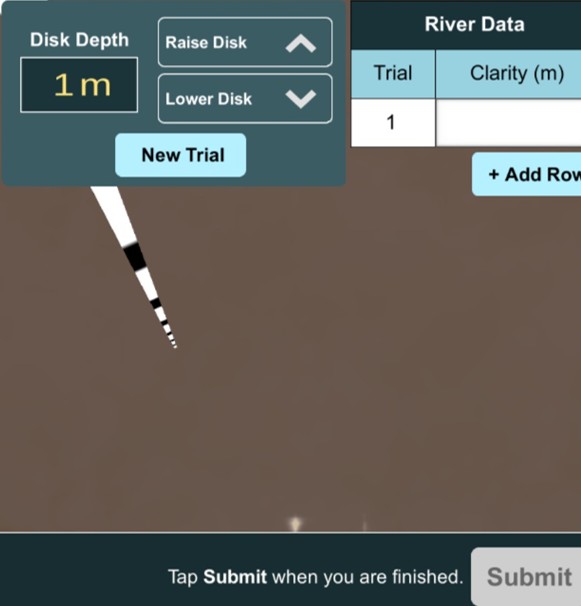

For example, in ‘Clear Water’, students used a simulated apparatus to test the turbidity of water and collect data.

The SBTs also supported novel item compositions. Not every composition worked well, but NAEP gathered valuable data from student play-testing and usability studies, as well as direct data from test takers during pilot assessment administrations.

While most of my SBT designs are confidential assessment content, this overview captures some of the flavor of designing and developing these first-of-their-kind large-scale assessment experiences. I’ll continue expanding this section with detailed case studies as I publish more of my portfolio.